Responsible AI: What It Is, What It Demands, and What UK Businesses Must Get Right in 2026

Artificial intelligence is supposed to be the UK’s next great economic lever. The government has announced £14 billion in private-sector investment commitments, adopted every recommendation of the AI Opportunities Action Plan, and framed AI as the engine of a decade of national renewal.

Yet only 1 in 6 UK businesses are currently using AI. 80% have no active plans to adopt it.

The assumption is that cost, skills, and complexity are holding businesses back. The data says otherwise.

When UK businesses were asked to rate the significance of their barriers to AI adoption, ethical concerns ranked first. Above cost. Above regulation. Above everything.

That is not a technology problem. That is a trust problem. And trust, in AI, has a name: Responsible AI.

What Is Responsible AI?

Responsible AI is the practice of designing, building, deploying, and monitoring AI systems in a way that is fair, transparent, accountable, safe, and trustworthy — not just in a controlled environment, but in the real world, where real decisions affect real people. It is not a philosophy. It is an operational discipline. Every choice made during development — what data to use, how outputs are validated, who is accountable for errors — either contributes to a responsible system or quietly undermines one.

It is worth separating it from a term it is frequently confused with. Ethical AI asks whether an AI system is moral. Responsible AI asks whether it is governed. A system can be built with the best intentions and still cause harm if the governance is absent. Most AI failures documented in the UK to date were not caused by bad intent. They were caused by insufficient consideration of the system’s requirements before deployment.

That is precisely what this article addresses.

Why Responsible AI Is No Longer Optional for UK Businesses in 2026

Cost and complexity are not the main reasons UK businesses are avoiding AI. They are avoiding it because they do not trust it, and neither do the people they serve. The consequences of getting this wrong are no longer theoretical.

1. Businesses Are Avoiding AI Over Ethics

When UK businesses that have not adopted AI were asked to rate the significance of each barrier, ethical concerns ranked as the most significant obstacle, cited by 80% of respondents. That placed ethics above high costs (76%) and unclear regulation (72%).

This is not a fringe concern. It is the dominant one. And it does not go away once a business starts using AI. 48% of businesses already using AI worry that it could negatively affect employees’ critical thinking. 42% see legal risks. 58% are concerned that it could reduce business creativity.

The businesses holding back are not being overcautious. They are sensing — correctly — that deploying AI without the right foundations carries a risk they cannot yet quantify. Responsible AI is what gives that risk a structure and a solution.

2. The British Public Does Not Trust Unaccountable AI

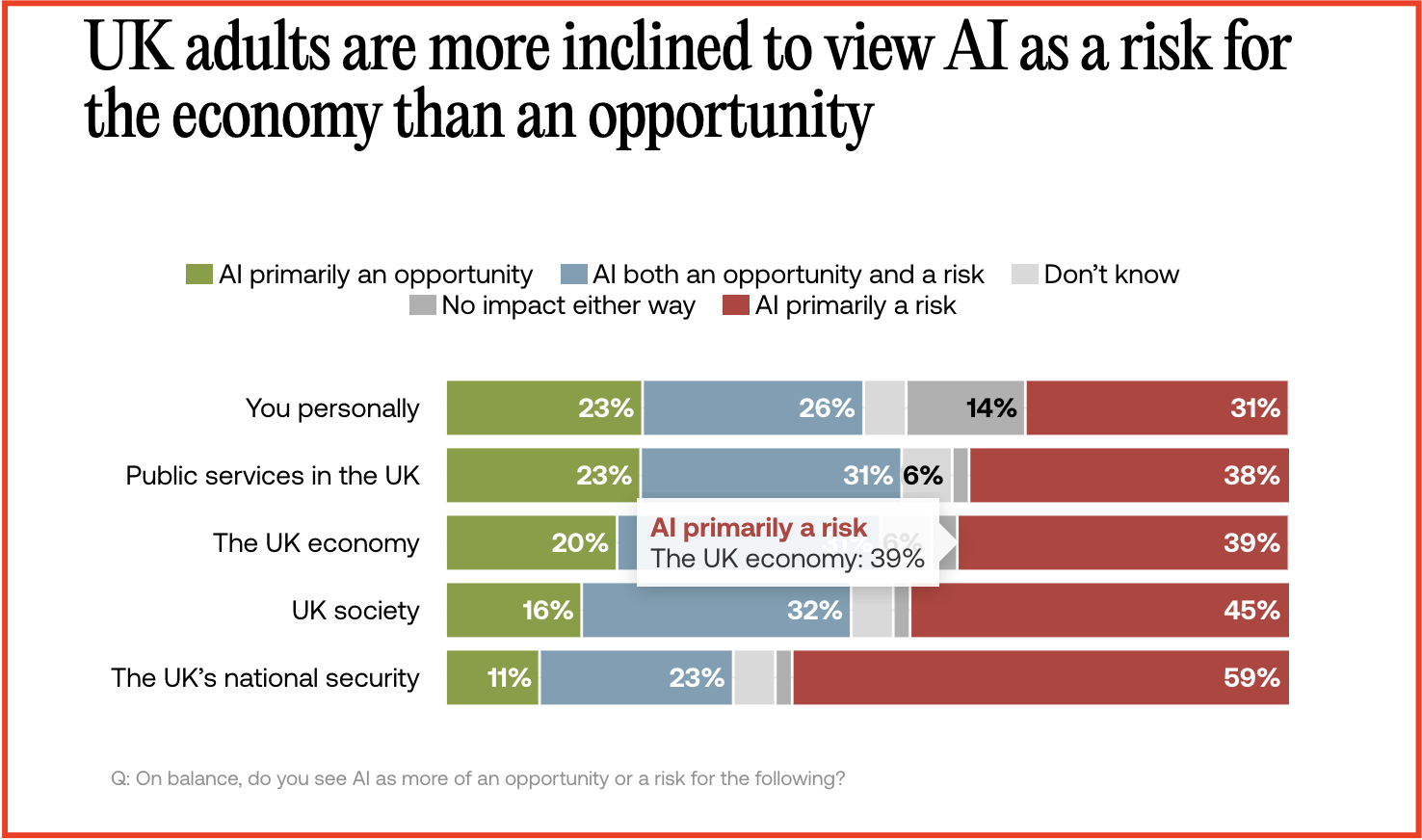

The end users of every AI-powered product or service built in the UK are the same people expressing the deepest reservations about it. 59% of UK adults view AI as a risk to national security. 45% see it as a risk to society. 39% see it as a risk to the economy. The three most cited personal barriers to engaging with AI are lack of trust, privacy concerns, and ethical worries — in that order.

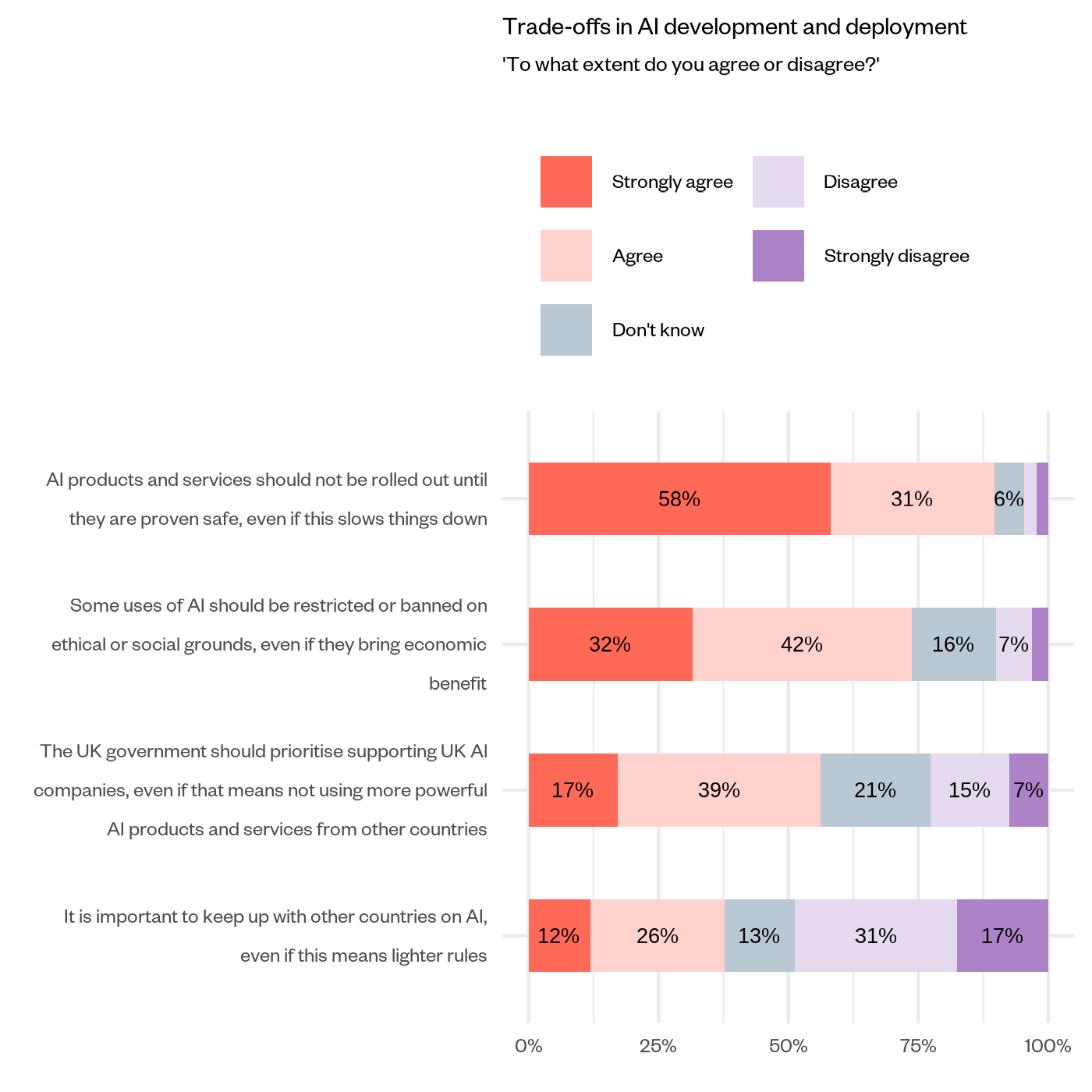

Public demand for governance is not softening with time. 72% of UK adults say laws and regulation around AI would increase their comfort with the technology — up 10 percentage points since 2023. 89% believe safety should come before speed, even if that means slowing AI development down.

For any business building AI-powered products or services, this is not an abstract social concern. It is a direct commercial one. The people you are building for have already decided what they expect. The question is whether what you are building meets that expectation.

3. UK Regulators Are Actively Closing the Gap

The UK has no single AI law. But that does not mean there are no consequences.

The ICO, FCA, Ofcom, and CMA are each applying the UK Government’s five AI principles within their existing legal powers — covering data protection, financial services, online safety, and competition, respectively. In June 2025, the ICO published its AI and biometrics strategy, committing explicitly to a statutory code of practice on AI and automated decision-making.

The direction of travel is clear. What is currently expected is becoming enforceable. Businesses that treat Responsible AI as a future concern are making a decision today that their regulator may revisit tomorrow.

4. The Consequences Are Already Playing Out

The risks of irresponsible AI are not waiting for regulation to catch up. They are already documented, already public, and already attached to real organisations.

In November 2024, the ICO audited AI-powered recruitment tools used by UK employers and found systems that inferred candidates’ gender and ethnicity from their names — filtering people out without their knowledge or consent. 296 recommendations were issued. All were accepted.

Around the same time, a freedom of information request revealed that an AI system used to assess universal credit claims was producing errors at a statistically significant rate. For claimants in financial difficulty, an automated error is not an inconvenience. It can mean missed rent or going without food.

In neither case did anyone set out to cause harm. In both, AI was deployed without the foundations that Responsible AI demands.

How to Build Responsible AI: 7 Demands Every UK Business Must Meet in 2026

Building AI responsibly is not a checklist you complete before launch. It is a set of non-negotiable demands that must be addressed at every stage — from the first design decision to long after the system goes live.

Each demand is grounded in one of seven core principles that define what responsible AI actually requires: Fairness, Transparency, Explainability, Accountability, Safety, Privacy, and Human Oversight.

Get any one of these wrong, and the consequences are not abstract. They are operational, reputational, and increasingly regulatory.

1: Fairness — Find the Bias Before Your System Does

Bias does not announce itself at launch. It is embedded quietly in training data, model design, and the choices made about what to optimise for — and it surfaces only when real people are affected by outcomes they cannot explain or challenge.

Most organisations assume fairness is the default. It is not. Fairness means actively testing AI outputs across different demographic groups, examining training data for historical imbalances, and building correction mechanisms before deployment — not after a complaint arrives.

The consequences of skipping this step are already documented in the UK. The ICO’s November 2024 audit of AI-powered recruitment tools, described earlier, found systems inferring candidates’ gender and ethnicity from their names and filtering people out without their knowledge or consent. As ICO Director of Assurance Ian Hulme stated: “AI can bring real benefits to the hiring process, but it also introduces new risks that may cause harm to jobseekers if it is not used lawfully and fairly.”

296 recommendations were issued. All were accepted. The organisations involved did not set out to discriminate. They simply did not look hard enough before they launched.
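Testing outputs across demographic groups can start very simply. The sketch below is one illustrative way to compare favourable-outcome rates between groups and flag a gap for investigation. The group labels, the sample data, and the 0.8 review threshold are all assumptions made for the example, not a UK legal standard or any particular organisation's method.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favourable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system produced the favourable outcome.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparity_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no favourable outcomes anywhere, so no gap to measure
    return min(rates.values()) / highest


# Hypothetical screening outcomes: (group label, shortlisted?)
outcomes = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 20 + [("group_b", False)] * 80
)

rates = selection_rates(outcomes)
ratio = disparity_ratio(rates)

# Assumed internal trigger for a closer look, not a legal test.
REVIEW_THRESHOLD = 0.8
needs_review = ratio < REVIEW_THRESHOLD
```

A low ratio does not prove discrimination, and a high one does not rule it out. It is a prompt to examine the training data and the model before launch rather than after a complaint.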

2: Transparency — Make Sure Your AI Can Show Its Work

Opacity in AI is not a neutral position. When a system makes a decision that affects someone — a rejected application, a failed screening, a denied claim — and cannot explain how it reached that outcome, it does not just create confusion. It creates liability.

Transparency operates at two levels that must both be addressed. External transparency means telling the people your AI affects that it was involved and what it concluded. Internal transparency means ensuring your organisation can audit the system’s behaviour when something goes wrong. Most businesses focus on the first and neglect the second — until a regulator asks a question they cannot answer.

The UK Government’s AI White Paper identifies appropriate transparency and explainability as one of its five cross-sectoral principles, and the ICO’s guidance on explaining AI decisions makes clear that organisations must be able to communicate how and why an AI system reached its output in a way that is meaningful to the person it affects — not just technically accurate to the team that built it.

Transparency is not a documentation exercise. It is a design decision that must be made before a system is built, not after it is questioned.
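Internal transparency depends on being able to reconstruct what the system did for a specific person. As a minimal sketch, assuming an in-memory list stands in for a real append-only store, a decision record might capture the model version, the inputs, the output, and whether the person was told AI was involved. Every field and function name here is hypothetical.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    model_version: str
    inputs: dict
    output: str
    subject_notified: bool  # external transparency: was the person told?
    recorded_at: str


def record_decision(log, decision_id, model_version, inputs, output, subject_notified):
    """Append one decision to the audit log as a JSON line and return the record."""
    record = DecisionRecord(
        decision_id=decision_id,
        model_version=model_version,
        inputs=inputs,
        output=output,
        subject_notified=subject_notified,
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(record), sort_keys=True))
    return record


def find_decision(log, decision_id):
    """Return the stored entry for a decision, or None if it was never logged."""
    for line in log:
        entry = json.loads(line)
        if entry["decision_id"] == decision_id:
            return entry
    return None


# Usage: the list is a stand-in for durable, access-controlled storage.
audit_log = []
record_decision(
    audit_log, "app-0001", "screening-v3",
    {"years_experience": 4}, "referred for review", subject_notified=True,
)
entry = find_decision(audit_log, "app-0001")
```

The design choice that matters is that the record is written at decision time. A log assembled after a regulator asks is a reconstruction, not an audit trail.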

3: Explainability — Build AI That Can Justify Every Decision It Makes

Transparency tells people that AI was involved. Explainability tells them why it reached that conclusion. These are not the same thing — and confusing them is one of the most common and costly mistakes organisations make when deploying AI in high-stakes contexts.

A system that cannot justify its outputs to the people it affects is not just a governance problem. It is a trust problem. In recruitment, credit, healthcare, and benefits, or anywhere else a decision materially affects someone’s life, an unexplained automated outcome is unacceptable to the individual, the regulator, and, increasingly, the courts.

The ICO’s guidance on explaining decisions made with AI is explicit: organisations must provide meaningful explanations of AI-assisted decisions — not technical descriptions of model architecture, but clear, accessible reasoning that the affected individual can understand and act on.

Explainability must be designed into the system from the start. A model that was never built to explain itself is difficult and costly to make meaningfully explainable after the fact. By the time a regulator or a customer asks the question, it is usually too late to go back to the drawing board.
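One way to design explanation in from the start is to have the scoring step return its main reasons in plain language alongside the outcome. The sketch below assumes a deliberately simple linear score. The weights, labels, threshold, and feature names are invented for illustration, and a real system would need explanations suited to its own model.

```python
# Hypothetical weights for an illustrative linear affordability score.
WEIGHTS = {"income_to_debt": 1.5, "years_at_address": 0.2, "missed_payments": -1.0}

# Plain-language labels so the explanation is readable by the person affected.
LABELS = {
    "income_to_debt": "Income relative to debt",
    "years_at_address": "Time at current address",
    "missed_payments": "Missed payments in the last year",
}

THRESHOLD = 3.0  # assumed cut-off for automatic approval


def explain_decision(features, top_n=2):
    """Return (outcome, reasons) where reasons name the largest contributions."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    score = sum(contributions.values())
    outcome = "approved" if score >= THRESHOLD else "referred for review"

    # Rank the factors that moved the score, largest effect first.
    ranked = sorted(
        (name for name in contributions if contributions[name] != 0),
        key=lambda name: abs(contributions[name]),
        reverse=True,
    )
    reasons = [
        f"{LABELS[name]} {'raised' if contributions[name] > 0 else 'lowered'} the score"
        for name in ranked[:top_n]
    ]
    return outcome, reasons


applicant = {"income_to_debt": 2.0, "years_at_address": 1.0, "missed_payments": 2.0}
outcome, reasons = explain_decision(applicant)
```

For this applicant the sketch returns an outcome of "referred for review", with income relative to debt and missed payments as the two leading factors. The point is that the reasons come from the same calculation that produced the decision, not from a separate narrative written afterwards.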

4: Accountability — Make Sure Someone Owns What Your AI Does

The most common governance failure in AI is not technical. It is organisational. Accountability gets distributed so widely across teams, vendors, and departments that when a system produces a harmful outcome, it effectively belongs to no one. No named owner. No escalation path. No correction process.

This is not a theoretical risk. It is the default state of most AI deployments that have not been deliberately designed otherwise. Every AI system needs a named human owner — someone responsible for its outputs, its performance, and what happens when it gets something wrong. That ownership must be established before deployment, documented, and visible to everyone who interacts with the system.

The commercial pressure to get this right is already arriving. 87% of senior UK business leaders say their customers are increasingly asking how their AI is governed and who is accountable when something goes wrong. Meanwhile, enterprises where senior leadership actively shapes AI governance achieve significantly greater business value than those that delegate it to technical teams alone.

Accountability is not a governance document. It is a decision made on day one about who is responsible — and it has to be a real answer, not an organisational chart.
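Ownership is easier to enforce when it is a condition of release, not a line in a policy. As a rough sketch, a deployment gate could refuse any system whose ownership record is incomplete. The record fields, names, and addresses below are assumptions chosen to mirror the named owner, escalation path, and correction process described above.

```python
from dataclasses import dataclass


@dataclass
class OwnershipRecord:
    system_name: str
    owner_name: str          # a named person, not a team or a vendor
    owner_role: str
    escalation_contact: str  # who is told when the system gets something wrong
    correction_process: str  # where the fix procedure is documented


def missing_fields(record):
    """List the ownership fields that are empty or whitespace only."""
    return [name for name, value in vars(record).items() if not str(value).strip()]


def approve_deployment(record):
    """Return True only when every ownership field is filled in."""
    gaps = missing_fields(record)
    if gaps:
        raise ValueError(
            f"{record.system_name or 'unnamed system'} cannot be deployed; "
            f"missing: {', '.join(gaps)}"
        )
    return True


# Hypothetical records for illustration.
complete = OwnershipRecord(
    "cv-screening", "A. Example", "Head of Talent",
    "ai-escalation@example.com", "runbooks/cv-screening-corrections",
)
incomplete = OwnershipRecord("chat-assistant", "", "Product Lead", " ", "runbooks/chat")

approved = approve_deployment(complete)
gaps = missing_fields(incomplete)
```

A check like this cannot tell whether the named owner has real authority. It only guarantees the question was answered before launch.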

5: Safety — Test for the Real World, Not Just the Lab

An AI system that performs well in a controlled environment and fails in the real world is not a safe system. It is an untested one. Real-world conditions include edge cases, unexpected inputs, and users behaving in ways the development team never anticipated. A system that was not built and tested for those conditions will encounter them anyway — the only question is whether the organisation finds out first or the public does.

Safety in responsible AI is not a pre-launch sign-off. It is an ongoing operational discipline. The UK Government’s AI White Paper is explicit: AI systems must function in a robust, secure, and safe way throughout their entire lifecycle, with risks continually identified, assessed, and managed — not signed off at launch and forgotten.

The stakes of neglecting this are rising as AI becomes more autonomous. Only one in five companies currently has a mature governance model for autonomous AI agents — systems that not only produce outputs but also take actions. As the House of Lords Library warned in January 2026, passive loss of control can occur when AI decisions become too opaque or too fast for meaningful human oversight.

Safety is not a feature. It is a commitment to continuous monitoring that begins at deployment and never ends.
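Continuous monitoring usually includes checking whether live inputs or scores have drifted away from what the system saw during testing. One common heuristic is the Population Stability Index, sketched below. The bin edges, the sample scores, and the 0.2 alert level are assumptions: 0.2 is a widely used rule of thumb, not a regulatory requirement.

```python
import math


def bin_shares(values, edges):
    """Return the share of values falling into each bin defined by sorted edges."""
    if not values:
        raise ValueError("cannot compute bin shares for an empty sample")
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(value >= edge for edge in edges)  # number of edges passed
        counts[index] += 1
    return [count / len(values) for count in counts]


def population_stability_index(expected, actual, floor=1e-6):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, floor), max(a, floor)  # avoid log(0) for empty bins
        psi += (a - e) * math.log(a / e)
    return psi


EDGES = [0.25, 0.5, 0.75]  # assumed score bands
ALERT_LEVEL = 0.2          # assumed rule-of-thumb threshold

# Hypothetical model scores: at validation time and in live use.
baseline = [0.1] * 25 + [0.3] * 25 + [0.6] * 25 + [0.9] * 25
live = [0.1] * 10 + [0.3] * 20 + [0.6] * 30 + [0.9] * 40

psi = population_stability_index(bin_shares(baseline, EDGES), bin_shares(live, EDGES))
drift_alert = psi > ALERT_LEVEL
```

A drift alert does not say the system is unsafe. It says the conditions it was tested under no longer match the conditions it is operating in, which is the cue for a human to look.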

6: Privacy — Treat the Data Behind Your AI as Carefully as the AI Itself

AI systems are data-hungry by design. The data used to train, run, and improve them is often personal, sensitive, or commercially confidential. Most organisations focus their data governance efforts on the outputs of their AI systems. Far fewer apply the same rigour to what goes in, and that is where the exposure sits.

A trained AI model does not just use data. It encodes it. Information about the individuals whose data was used to build the system can be inadvertently preserved inside the model itself, where someone who knows how to look can extract it. Data privacy in a responsible AI context is not just a legal obligation under UK GDPR. It is a structural requirement that must be addressed at every stage: data collection, model training, and deployment.

The business community is already feeling this. 45% of UK businesses cite data security concerns as a barrier to fully scaling AI — not just adopting it, but scaling it. That figure reflects organisations that have started the journey and hit the wall that insufficient data governance builds.

Privacy is not an IT function to be resolved separately from the AI development process. It is part of the development process — and it must be treated as such from day one.
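Treating inputs carefully can begin before any data reaches a training pipeline. The sketch below shows one possible minimisation step: dropping direct identifiers and replacing the customer ID with a keyed hash. The field names and the key are hypothetical. Pseudonymised data is still personal data under UK GDPR, and this step alone does not stop a model memorising what remains.

```python
import hashlib
import hmac

# Hypothetical field names treated as direct identifiers.
DIRECT_IDENTIFIERS = {"name", "email", "phone"}


def pseudonymise(record, key, id_field="customer_id"):
    """Drop direct identifiers and replace the ID with a keyed SHA-256 token.

    `key` must be bytes and should be stored separately from the dataset,
    otherwise the tokens can be recomputed and re-linked to individuals.
    """
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    token = hmac.new(key, str(record[id_field]).encode("utf-8"), hashlib.sha256)
    cleaned[id_field] = token.hexdigest()
    return cleaned


# Illustrative only: a real key would come from a secrets manager.
EXAMPLE_KEY = b"replace-with-a-managed-secret"

raw = {
    "customer_id": 1042,
    "name": "Sam Example",
    "email": "sam@example.com",
    "phone": "00000 000000",
    "postcode_area": "M1",
    "monthly_spend": 212.50,
}
training_row = pseudonymise(raw, EXAMPLE_KEY)
```

Using a keyed hash instead of a plain one means the same customer maps to the same token across datasets, while anyone without the key cannot rebuild the mapping by hashing known IDs.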

7: Human Oversight — Keep People in Control of Decisions That Matter

Removing human oversight from consequential AI decisions is not an efficiency gain. It is a liability. The more reliable an AI system appears, the stronger the temptation to reduce human review — and the stronger the risk that errors go undetected, unchallenged, and unresolved until they cause harm that cannot be quietly corrected.

This is not hypothetical. The universal credit case described earlier is the clearest example: a freedom of information request revealed that the AI system used by the UK Government to assess claims was producing errors at a statistically significant rate, a finding confirmed by a fairness analysis in February 2024. For claimants in financial difficulty, that can mean missed rent or going without food while waiting weeks for a human review to correct what a machine got wrong.

84% of UK businesses currently using AI report applying at least some human input or checking to AI outputs. But there is a significant difference between meaningful oversight and procedural oversight. A human who rubber-stamps every AI output without genuinely reviewing it is not providing oversight. They are providing cover.

Human oversight means building systems in which people retain genuine control over decisions that matter — not as a formality, but as a safeguard designed, documented, and enforced from the moment the system goes live.
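In practice, genuine control tends to need two things: a rule for which decisions must reach a person, and a way to check that reviewers are actually exercising judgement. The sketch below illustrates both under simple assumptions. The 0.9 confidence floor and the outcome labels are invented for the example.

```python
def route_decision(confidence, adverse_outcome, min_confidence=0.9):
    """Send adverse or low-confidence decisions to a human reviewer."""
    if adverse_outcome or confidence < min_confidence:
        return "human_review"
    return "automated"


def override_rate(reviews):
    """Share of reviewed cases where the reviewer changed the model's outcome.

    `reviews` is a list of (model_outcome, reviewer_outcome) pairs.
    Returns None when nothing has been reviewed yet.
    """
    if not reviews:
        return None
    changed = sum(1 for model, reviewer in reviews if model != reviewer)
    return changed / len(reviews)


# Hypothetical cases.
routes = [
    route_decision(confidence=0.99, adverse_outcome=True),   # denial: always reviewed
    route_decision(confidence=0.95, adverse_outcome=False),  # confident, favourable
    route_decision(confidence=0.60, adverse_outcome=False),  # uncertain
]

reviews = [("deny", "deny"), ("deny", "approve"), ("deny", "deny"), ("deny", "deny")]
rate = override_rate(reviews)
```

An override rate that sits at zero for months is worth investigating. It may mean the model is excellent, or it may mean reviewers are rubber-stamping, and only a sample audit of their decisions can tell the two apart.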

Conclusion

The UK is moving fast on AI. But speed without the right foundations does not build competitive advantage — it builds risk. The businesses that will lead are not the ones that deployed AI first. They are the ones that deployed it responsibly, with fairness, transparency, explainability, accountability, safety, privacy, and human oversight built in from day one.

Responsible AI is not a future concern. The consequences of ignoring it are already documented, already public, and already attached to real UK organisations.

At QuantumXL, we build AI systems that are production-ready, explainable, and governed from the ground up — not retrofitted with responsibility after the fact. If you are building AI and want to get it right from the start, let’s talk.